The four numbers, in plain language

For over a decade the State of DevOps Report — the DORA program — has measured tens of thousands of engineering teams against four numbers. They are the industry's gold standard for how well a software team actually ships and recovers. Ship shows them on one page so you can read your standing without commissioning a survey or hoping someone turns an exported CSV into a board chart.

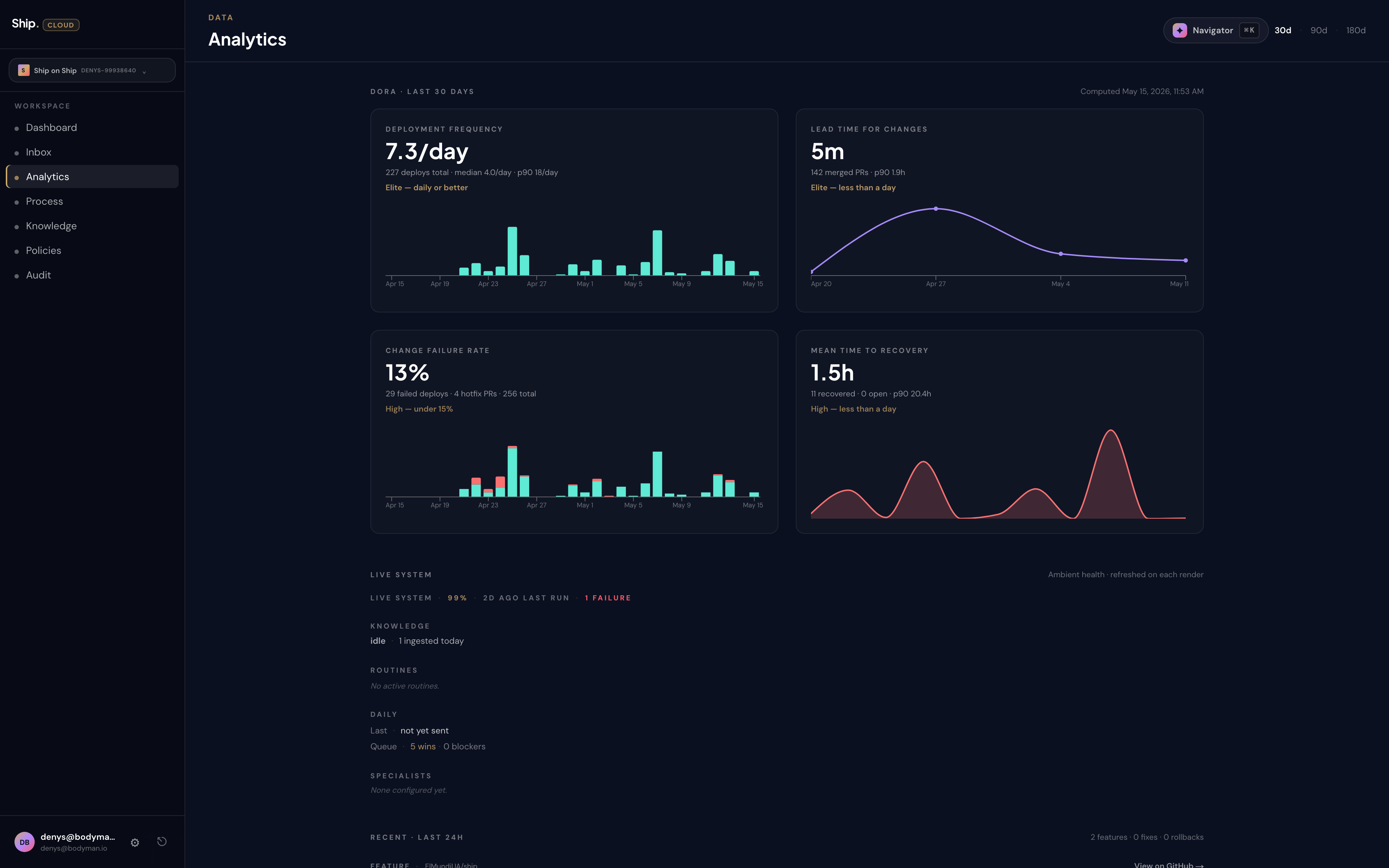

The Analytics page renders the four cards across the top, with a tier label on each. The reference workspace looked like this on the day this page was written:

- Deployment frequency: 7.3 per day — Elite

- Lead time for changes: 5 minutes — Elite

- Change failure rate: 13 percent — High

- Mean time to recovery: 1.5 hours — High

You will likely have different numbers. The shape of the page will be the same.

Deployment frequency

How often does work actually reach your users? Not how often did somebody open a pull request. Not how often did a meeting end with a "great, ship it." How often did a real change cross the line from "we made it" to "the customer can use it."

What the tiers look like. Elite teams deploy multiple times a day. High-performing teams deploy between once a day and once a week. Medium teams deploy between once a week and once a month. Low teams deploy less often than once a month. The reference workspace's 7.3 per day is comfortably inside Elite: small changes flowing continuously rather than monthly events.
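To make the tier boundaries concrete, here is a minimal Python sketch of the classification described above. The function name and the exact cutoffs (exactly once a day counts as High, a month is treated as 30 days) are assumptions for illustration, not a description of how Ship computes its label.

```python
def deployment_frequency_tier(deploys_per_day: float) -> str:
    """Map an average deploys-per-day figure to a tier name.

    Boundary handling is an assumption: "multiple times a day" is read
    as strictly more than one deploy per day, and a month as 30 days.
    """
    if deploys_per_day > 1.0:
        return "Elite"   # multiple times a day
    if deploys_per_day >= 1.0 / 7:
        return "High"    # between once a day and once a week
    if deploys_per_day >= 1.0 / 30:
        return "Medium"  # between once a week and once a month
    return "Low"         # less often than monthly


# The reference workspace's figure from this page.
print(deployment_frequency_tier(7.3))
```

With the reference workspace's 7.3 deploys per day, the sketch returns Elite, matching the card on the page.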

Why this matters to you. Deployment frequency is your speed of learning. Every deployment is a chance to find out whether your last decision worked. A team deploying weekly learns about Tuesday's idea by next Tuesday. A team deploying daily learns by Wednesday. If the rest of your business is moving faster than this number, your product line is the bottleneck.

Lead time for changes

How long does an idea take, from the moment your team starts working on it to the moment a customer can use it? Elite teams get this under a day. High teams between a day and a week. Medium teams between a week and a month. Low teams take more than a month. The reference workspace's 5-minute lead time is unusually fast and is a property of small, well-bounded changes — not a property of working harder.
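As a rough illustration, lead time can be computed from a pair of timestamps per change and then bucketed into the tiers above. This is a sketch under stated assumptions: the `(started_at, deployed_at)` pairs are hypothetical, using the median as the summary is a choice made here, and which event counts as the start of the clock depends on how your tooling records it.

```python
from datetime import datetime, timedelta
from statistics import median


def median_lead_time(changes):
    """changes: list of (started_at, deployed_at) datetime pairs (hypothetical shape)."""
    seconds = [(deployed - started).total_seconds() for started, deployed in changes]
    return timedelta(seconds=median(seconds))


def lead_time_tier(lead_time: timedelta) -> str:
    """Bucket a lead time into the tiers described above (a month is taken as 30 days)."""
    if lead_time < timedelta(days=1):
        return "Elite"
    if lead_time <= timedelta(weeks=1):
        return "High"
    if lead_time <= timedelta(days=30):
        return "Medium"
    return "Low"


# Three made-up changes taking 4, 5, and 10 minutes.
start = datetime(2024, 6, 3, 9, 0)
sample = [(start, start + timedelta(minutes=m)) for m in (4, 5, 10)]
typical = median_lead_time(sample)
print(typical, lead_time_tier(typical))
```

The median is used so that one unusually slow change does not dominate the figure; a mean would be a reasonable alternative if you want slow outliers to show.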

Why this matters to you. Lead time is your responsiveness to a market that does not wait. A competitor ships a feature on Monday; your team's lead time is the answer to "how soon could we respond?" If your lead time is in months and a competitor's is in days, you are not in the same race.

This is also the metric that punishes batching. Long lead times are usually a story about big releases, with work piling up for weeks before it ships together. The way to move from Medium to High is rarely "work faster." It is "ship smaller things, more often."

Change failure rate

When you ship, how often does it go wrong? Think of an outage, a rollback, or a same-day hotfix. Elite and High teams sit between 0 and 15 percent, meaning fewer than one in six changes causes a problem worth treating as a failure. Medium and Low teams sit between 16 and 30 percent, or higher. The reference workspace's 13 percent is High tier: solidly inside the band where the process is trustworthy, with room to tighten.
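The arithmetic behind the percentage is simple enough to sketch. The function names below are hypothetical, and the bands follow only what this page states: it groups Elite with High and Medium with Low, so the sketch does not try to split them further.

```python
def change_failure_rate(total_deploys: int, failed_deploys: int) -> float:
    """Percentage of deploys that needed remediation (outage, rollback, hotfix)."""
    if total_deploys <= 0:
        raise ValueError("need at least one deploy to compute a rate")
    return 100.0 * failed_deploys / total_deploys


def change_failure_band(rate_percent: float) -> str:
    """Coarse band from this page; anything above 15 percent is treated as the lower band."""
    return "Elite/High" if rate_percent <= 15 else "Medium/Low"


# Made-up counts that reproduce the reference workspace's 13 percent.
rate = change_failure_rate(total_deploys=100, failed_deploys=13)
print(rate, change_failure_band(rate))
```

What counts as a failed deploy is a policy decision your team has to make before the number means anything. The sketch simply takes the count as given.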

Why this matters to you. Change failure rate is the credibility your team carries every time it ships. A team with a 5 percent rate has earned permission to deploy on Friday afternoon. A team with a 40 percent rate has not. This number directly governs the political latitude your engineers have when they propose a change late in the week or close to a launch — which is itself a business outcome.

A rising change failure rate, week over week, is the early warning that quality is being traded for speed. Watch the trend, not just the snapshot.

Mean time to recovery (MTTR)

When something does go wrong (and it will), how long until the system is right again? Elite teams recover in under an hour. High teams between an hour and a day. Medium teams between a day and a week. Low teams take longer than a week. The reference workspace's 1.5 hours sits at the fast end of the High band, just past the one-hour Elite cutoff.

Why this matters to you. MTTR is the cost of the change failure rate above. A team that fails 20 percent of the time but recovers in twenty minutes is, in practice, more reliable than a team that fails 10 percent of the time and takes three days to fix it. Customers experience MTTR. They do not experience change failure rate.
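The comparison above can be checked with back-of-the-envelope arithmetic. The sketch below assumes both teams ship 100 changes, that every failure leaves the system broken for the full recovery time, and that failures do not overlap. The function name is hypothetical.

```python
def expected_broken_minutes(deploys: int, failure_percent: float, recovery_minutes: float) -> float:
    """Total minutes spent in a broken state across a batch of deploys."""
    failures = deploys * failure_percent / 100
    return failures * recovery_minutes


# Team A: fails 20 percent of the time, recovers in twenty minutes.
team_a = expected_broken_minutes(100, 20, 20)
# Team B: fails 10 percent of the time, takes three days to recover.
team_b = expected_broken_minutes(100, 10, 3 * 24 * 60)

print(f"Team A: {team_a / 60:.1f} hours broken per 100 deploys")  # 6.7 hours
print(f"Team B: {team_b / 60:.1f} hours broken per 100 deploys")  # 720.0 hours
```

Under these assumptions the team with twice the failure rate spends roughly a hundredth of the time broken, which is the sense in which customers experience MTTR rather than change failure rate.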

MTTR is also a property of your observability and rollback discipline — not your heroics. The teams who recover fastest are the ones who can roll a change back as easily as they shipped it. If your MTTR is slow, the conversation is usually about deployment design (small changes, feature flags, easy rollback), not about who is on call.

Reading the page

The Analytics page reads left-to-right as a story. The first two cards, deployment frequency and lead time, are your speed. The last two, change failure rate and MTTR, are your stability. Speed without stability burns trust. Stability without speed burns market position. The four numbers together tell you whether your team is moving fast in a way that compounds, or fast in a way that catches up with you the following quarter.

The page also shows the window you are reading: the last 30 days by default, switchable to 90 or 180 days. Snapshots lie about trends. Look at a 90-day window when you want to know how your team actually performs. Look at a 30-day window when you want to know what changed since last month.
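To see why the window matters, here is a small sketch that computes deployment frequency over two trailing windows from the same made-up history. The function, the sample data, and the way the window is applied are assumptions for illustration, not a description of Ship's internals.

```python
from datetime import datetime, timedelta


def deploys_per_day(deploy_times, now, window_days=30):
    """Average deployments per day over the trailing window ending at `now`."""
    window_start = now - timedelta(days=window_days)
    in_window = [t for t in deploy_times if window_start < t <= now]
    return len(in_window) / window_days


# Made-up history: one deploy a day for two months, then three a day
# for the most recent 30 days.
now = datetime(2024, 6, 30, 12, 0)
history = [now - timedelta(days=d, hours=1) for d in range(30, 90)]
history += [now - timedelta(days=d, hours=h) for d in range(30) for h in (1, 2, 3)]

recent = deploys_per_day(history, now, window_days=30)
longer = deploys_per_day(history, now, window_days=90)
print(f"30-day window: {recent:.2f} per day")
print(f"90-day window: {longer:.2f} per day")
```

In this example the 30-day window shows the recent jump to three deploys a day, while the 90-day window averages it against the slower two months before, which is the difference between "what changed" and "what the team usually does."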

What to do with this

Print the four numbers somewhere your leadership sees them. Open them at the start of a quarterly review. Argue about the tier you are in, the tier you want to be in, and the one change to your process that would move you up. Most teams that move from Medium to High do it by reducing batch size. Most teams that move from High to Elite do it by automating the part of the deploy that is currently a person clicking go.

These four numbers are the conversation. The rest of Analytics is supporting evidence.